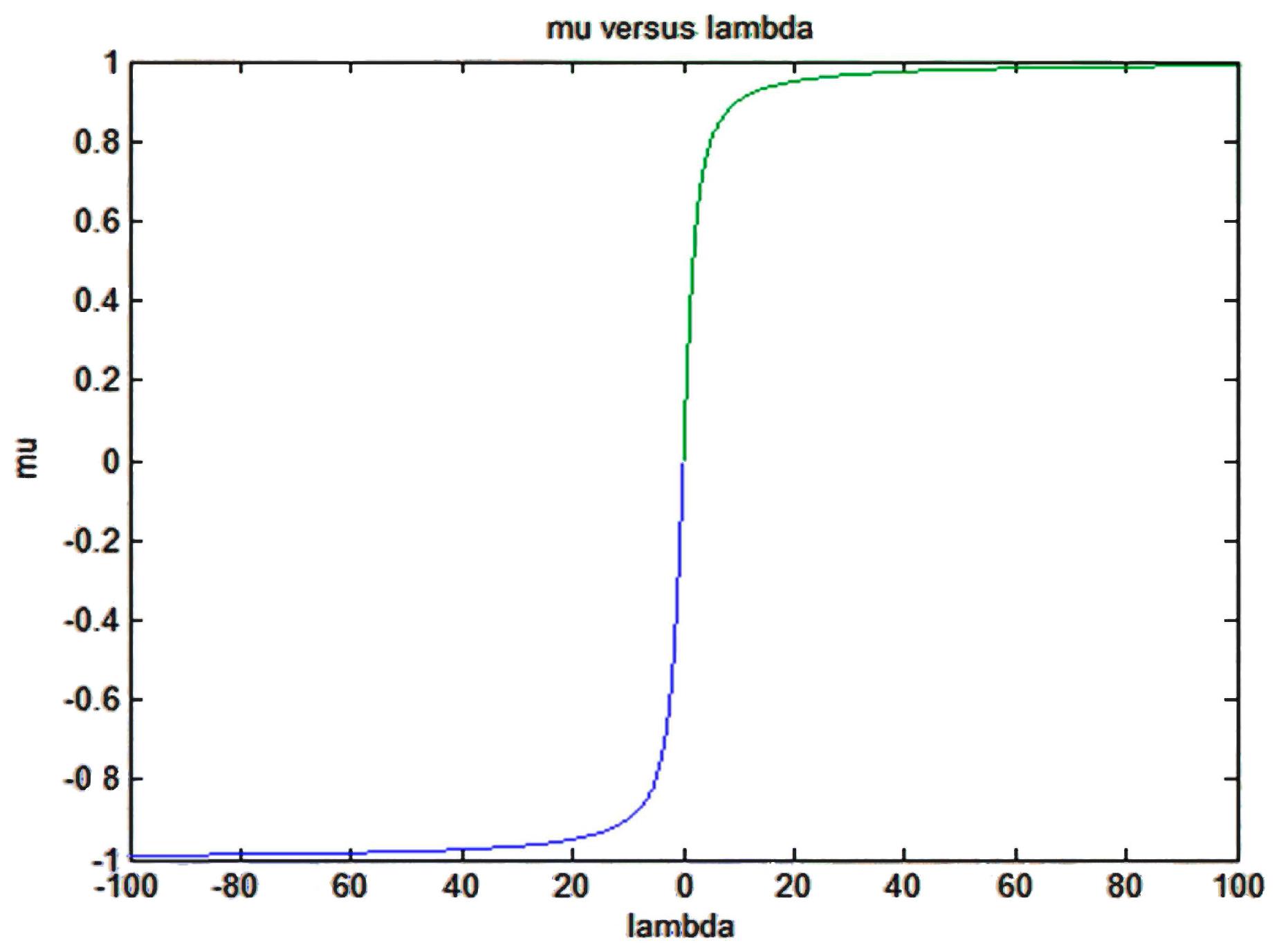

p l = 0.4 since there are two observed “l”s.p H = 0.2 because “H” was observed 1 time in 5 characters, and 1 / 5 = 0.2.p i represents the proportion of each unique character i in the input X. The part inside the summation is the most important part of the equation, because this is what assigns higher numbers to rarer events and lower numbers to common events. Think of summation as a loop which iterates over each unique character in a string. The sigma ( ) represents a summation that sums up for values from i = 1 to n.H is the symbol for entropy, and X is the input data, so H(X) means “The entropy of data X.”.This is the formula for calculating information entropy:Īt first glance this looks fairly complex, but after defining the terms involved it becomes much less mysterious. Since then, entropy has found many uses in different aspects of modern digital systems, from compression algorithms to cryptography. In that year, Claude Shannon published the famous paper A Mathematical Theory of Communication, which defined the field of information theory and, among other things, the idea of information entropy. It wasn’t until 1948 that the concept was first extended to the context we are interested in. The term entropy was first used in thermodynamics, the science of energy, in the 1860s. In the context of digital information, entropy-specifically information entropy-is typically thought of as a measure of randomness or uncertainty in data. While this term is probably not new to you, the meaning of entropy depends on the context in which it is used. Furthermore, you are probably familiar with Shannon entropy and how it is used to measure randomness. If you have worked in InfoSec for long, you have no doubt heard the term entropy. Ready? Let the math begin… What Is Entropy? Because some families of malware use domain generation algorithms that change domains frequently, blacklisting these types of domains is not an efficient means to protect environments. 
I will then show how relative entropy can be utilized against letter frequency patterns in domain names to identify malicious ones. I’ll first explain what is typically meant when people talk about entropy (Shannon entropy) before moving on to a similar formula (relative entropy) which has better applications in information security. In this article, we’ll dig into the possibility of using entropy in threat hunting to help identify adversarial behavior. This is the category entropy falls into: looking at a known technique (randomized data to thwart atomic indicators) to find both known and unknown malware. Behavioral indicators are the next level, which use knowledge of adversarial techniques to find both known and unknown activity. “Antivirus is dead” is a common refrain in the information security space, but if you look below the surface, what it really means is “atomic indicators are dead.” While there is value in static indicators, they are the bare minimum standard for detection these days and suffer from numerous drawbacks. Minimize downtime with after-hours support.Train continuously for real world situations.Operationalize your Microsoft security stack.Protect critical production Linux and Kubernetes.Protect your users’ email, identities, and SaaS apps.Protect your corporate endpoints and network.Deliver enterprise security across your IT environment.
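The “summation as a loop over each unique character” reading of the Shannon entropy formula can be sketched in a few lines of Python. This is a minimal illustration rather than code from the article: the function names `char_proportions` and `shannon_entropy` are hypothetical, the logarithm is assumed to be base 2 (so the result is in bits per character), and the string "Hello" is an assumed input chosen because it matches the proportions quoted above (one “H”, two “l”s, five characters).

```python
import math
from collections import Counter


def char_proportions(data: str) -> dict:
    """Return p_i, the proportion of each unique character in data."""
    counts = Counter(data)
    total = len(data)
    return {char: count / total for char, count in counts.items()}


def shannon_entropy(data: str) -> float:
    """Shannon entropy of data in bits per character (base-2 logarithm)."""
    if not data:
        return 0.0  # an empty input carries no information
    # The loop over unique characters plays the role of the sigma:
    # each term weights -log2(p_i), which is larger for rarer characters.
    return -sum(p * math.log2(p) for p in char_proportions(data).values())


if __name__ == "__main__":
    sample = "Hello"  # assumed example input
    print(char_proportions(sample))
    print(round(shannon_entropy(sample), 2))
```

For the assumed input "Hello", the proportions come out as 0.2 for “H” and 0.4 for “l”, and the entropy is about 1.92 bits per character. A string made of a single repeated character scores 0, since there is no uncertainty about the next character.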
